2D Discrete Fourier Transform for Image (very big question - lots of text)?

Essence of the issue:

There is a binarized black-and-white image, obtained after several stages of processing the original image with spatial-domain filters. It is a standardized employee profile form: black printed text on a white background, with a black-and-white photograph in the corner of the document. In some places the background of the document has artifacts: gray "spots" covering patches of pixels. These spots can sit on the clean background or lie underneath the text. That is, most of the text is on a white background, but some letters and words end up on a gray spot. The image needs to be filtered in the frequency domain.

Background and some clarifications (please don't skip):

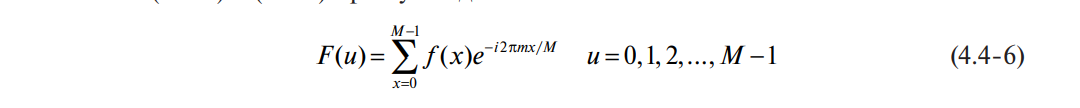

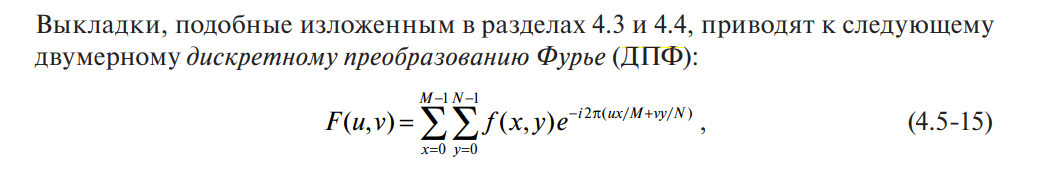

The first stage of frequency filtering is the transition to the frequency domain using the DFT (I know that for reasonable computation times you need the FFT, but I want to work through it step by step). I read about the DFT, and about image processing in general, in the book "Digital Image Processing" by R. Gonzalez and R. Woods. Let me just make two points:

    ///////// DFT
    double Re = 0, Im = 0, summaRe = 0, summaIm = 0, Ak[512] = {0}, Ak_1[512] = {0}, Arg = 0;
    for (int i = 0; i < 512; i++) {
        summaRe = 0; summaIm = 0;
        for (int j = 0; j < 512; j++) {
            Arg = 2.0*M_PI*j*i/512.0;
            Re = cos(Arg)*(massiv[j]);
            Im = sin(Arg)*(massiv[j]);
            summaRe = summaRe + Re;
            summaIm = summaIm + Im;
        }
        Ak[i] = sqrt(summaRe*summaRe + summaIm*summaIm);
    }

    public class FurieTrans {
        public List<Complex> koeffs;
        public double K;
        public FurieTrans() {
            koeffs = new List<Complex>();
        }
        public void dpf(List<Point> points, int count) {
            koeffs.Clear();
            K = points.Count;
            // loop computing the coefficients
            for (int u = 0; u < count; u++) {
                // summation loop
                Complex summa = new Complex();
                for (int k = 0; k < K; k++) {
                    Complex S = new Complex(points[k].X, points[k].Y);
                    double koeff = -2 * Math.PI * u * k / K;
                    Complex e = new Complex(Math.Cos(koeff), Math.Sin(koeff));
                    summa += (S * e);
                }
                koeffs.Add(summa / K);
            }
        }
        public List<Complex> undpf() {
            List<Complex> res = new List<Complex>();
            for (int k = 0; k < K - 1; k++) {
                Complex summa = new Complex();
                for (int u = 0; u < koeffs.Count; u++) {
                    double koeff = 2 * Math.PI * u * k / K;
                    Complex e = new Complex(Math.Cos(koeff), Math.Sin(koeff));
                    summa += (koeffs[u] * e);
                }
                res.Add(summa);
            }
            return res;
        }
    }

    static void MakeFurieTransform(FT_mode mode) {
        Bitmap FFT_bitmap = new Bitmap(workBitmap.Width, workBitmap.Height);
        double realPart = 0, imagePart = 0, rotAngle = 0, amplitude = 0;
        double e = 0;
        double summ = 0;
        if (mode == FT_mode.direct) {
            for (int u = 0; u < workBitmap.Width; u++) {
                for (int v = 0; v < workBitmap.Height; v++) {
                    summ = 0;
                    // summation loops
                    for (int x = 0; x < workBitmap.Width; x++) {
                        for (int y = 0; y < workBitmap.Height; y++) {
                            double Rot_coefficient = -2 * Math.PI * (u * x / workBitmap.Width + v * y / workBitmap.Height);
                            realPart = Math.Cos(Rot_coefficient);
                            imagePart = Math.Sin(Rot_coefficient);
                            e = realPart + imagePart;
                            summ += workBitmap.GetPixel(x, y).ToArgb() * e;
                        }
                    }
                    // build the Fourier spectrum
                    amplitude = Math.Sqrt(realPart * realPart + imagePart * imagePart);
                    rotAngle = Math.Atan(imagePart/realPart);
                    int_R = (int)amplitude;
                    NormalizePixel(ref int_R);
                    FFT_bitmap.SetPixel(u, v, Color.FromArgb(int_R, int_R, int_R));
                }
            }
        }
    }


Very interesting, nothing is clear.

Where I get the value of e (e = realPart + imagePart;): what do I do with the imaginary unit i in front of the sine? That is, e^(i*Angle) = cos(Angle) + i*sin(Angle), so what happens to the imaginary part in the implementation?

I don't quite understand what should serve as the new pixel color value (the amplitude, NormalizePixel and SetPixel lines at the end of the loop body). Am I doing the right thing?

Why did you decide that you need filtering in the frequency domain? It's not at all obvious from your description. What do you want to get as a result?

If you want to experiment with pictures, there is ImageMagick, which saves you from writing code: https://legacy.imagemagick.org/Usage/fourier/

There are also examples of filtering in the frequency domain there, such as removing a typographic (halftone) raster.

P.S. Gonzalez and Woods is a good book. :-)
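For reference, the core of a frequency-domain filter is multiplying each DFT coefficient by a mask value and then applying the inverse transform. Below is a minimal C sketch of an ideal (brick-wall) low-pass or high-pass mask on an unshifted spectrum, that is, with the zero frequency at index (0, 0). The function name, the array layout and the cutoff parameter are assumptions for illustration. This is not what ImageMagick does internally, and whether such a mask helps with gray spots would have to be checked on the actual image.

```c
#include <stddef.h>

/* Apply an ideal (brick-wall) mask in place to an unshifted w-by-h spectrum
   stored row by row in re/im.
   keep_low != 0: low-pass, zero every coefficient farther than `cutoff`
                  from the zero frequency.
   keep_low == 0: high-pass, zero every coefficient within `cutoff`.
   Hypothetical helper for illustration. */
void ideal_filter(double *re, double *im, int w, int h,
                  double cutoff, int keep_low)
{
    double cutoff2 = cutoff * cutoff;

    for (int v = 0; v < h; v++) {
        /* Indices above h/2 represent negative frequencies. */
        int fv = (v <= h / 2) ? v : v - h;
        for (int u = 0; u < w; u++) {
            int fu = (u <= w / 2) ? u : u - w;
            /* Squared distance from the zero frequency. */
            double d2 = (double)fu * fu + (double)fv * fv;
            int is_low = d2 <= cutoff2;
            if (is_low != (keep_low != 0)) {
                re[(size_t)v * w + u] = 0.0;
                im[(size_t)v * w + u] = 0.0;
            }
        }
    }
}
```

Ideal masks tend to produce ringing after the inverse transform. Smoother masks such as Gaussian or Butterworth are the usual alternative, at the cost of a less sharp cutoff.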
