Why doesn't multiprocessing work correctly?

I want to check a list of proxies for validity and run the checks in parallel with multiprocessing.

Without multiprocessing everything works correctly, but with it the proxies that get printed are incomplete.

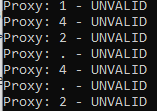

With multiprocessing:

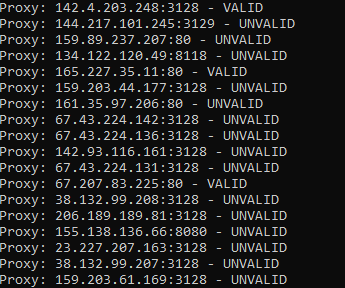

Without multiprocessing:

    import fake_useragent
    import requests
    from multiprocessing import Pool


    def check_prox(proxybase):
        for prox in proxybase:
            user = fake_useragent.UserAgent().random
            proxy = {'http': f'http://{prox}',
                     'https': f'https://{prox}'}
            check_url = 'http://example.com'
            try:
                requests.get(check_url, proxies=proxy, timeout=3)
            except:
                print(f'Proxy: {prox} - UNVALID')
                continue
            else:
                print(f'Proxy: {prox} - VALID')
                with open('valid_proxy.txt', 'a', encoding='utf-8') as file:
                    file.write(f'{prox}\n')


    with open('proxy.txt', 'r', encoding='utf-8') as file:
        check_proxy_base = file.read().split('\n')

    if __name__ == '__main__':
        pool = Pool(processes=3)
        pool.map(check_prox, check_proxy_base)
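A likely explanation, sketched under assumptions: `Pool.map` calls the function once per element of the list, so `check_prox` receives a single proxy string rather than the whole list, and its `for prox in proxybase` loop then walks over that string one character at a time. The demo below makes no network requests. The sample addresses and the helper names `chars_seen_by_loop` and `check_one` are made up for illustration, and it uses `multiprocessing.pool.ThreadPool` as a stand-in because its `map` hands out items the same way as `Pool.map` while running without any process-start setup.

```python
from multiprocessing.pool import ThreadPool  # stand-in: same map() semantics as Pool


def chars_seen_by_loop(proxybase):
    """Mimic the loop in check_prox: collect what `for prox in proxybase` yields."""
    return [prox for prox in proxybase]


def check_one(prox):
    """Hypothetical per-proxy worker: takes one proxy string, has no inner loop."""
    return prox


proxies = ["10.0.0.1:8080", "10.0.0.2:3128"]  # made-up sample addresses

with ThreadPool(processes=3) as pool:
    # map() passes ONE list element per call, so the loop iterates over
    # the characters of a single proxy string.
    looped = pool.map(chars_seen_by_loop, proxies)
    # Without the inner loop, each call sees the whole proxy string.
    whole = pool.map(check_one, proxies)

print(looped[0][:4])  # ['1', '0', '.', '0']
print(whole)          # ['10.0.0.1:8080', '10.0.0.2:3128']
```

If that is what is happening here, one possible fix is to drop the `for prox in proxybase:` loop so that `check_prox` handles exactly one proxy per call. It may also be worth filtering out empty strings, since `split('\n')` yields one when the file ends with a newline.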
